Difference between revisions of "Data services"

From Lsdf


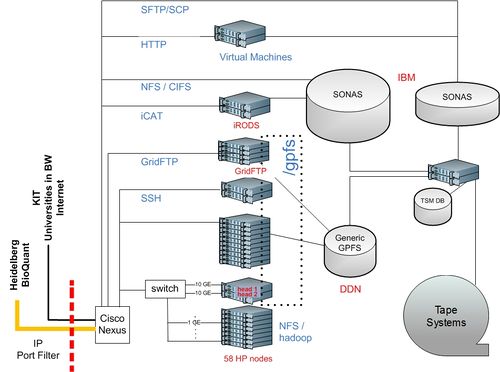


Latest revision as of 10:27, 29 July 2016

- Fast, reliable storage accessible via standard protocols

- Archival services for long-term access to data

- Cloud storage: S3 / REST

- Hadoop data intensive computing framework

- GridFTP Data Transfers

- HTTP/SFTP data sharing service

- GPU nodes (NVIDIA)

Access and use of services and resources

- Request Access to Resources

- After access is granted, continue to registration

- About LSDF Usage

- Acceptable Services Usage